We’ve all heard of 802.1Q, or Dot1q for short, and its VLAN ID range of 0 to 4095, but have you ever seen traffic tagged with VLAN ID 0? We’re here to talk about VLAN 0, Wireshark and traffic capturing when it comes to Citrix NetScaler.

DOT1Q

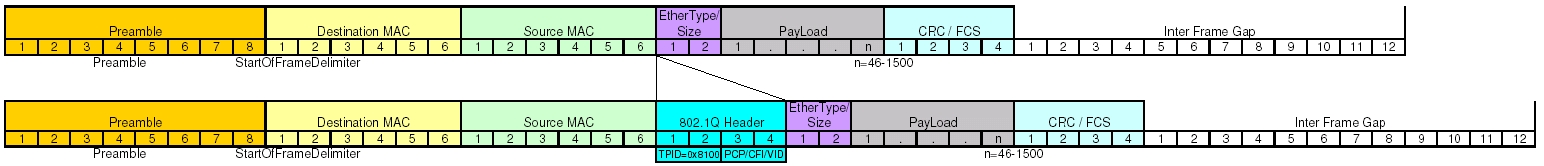

Dot1q inserts a 32-bit (4-byte) tag between the source MAC address and the EtherType field of the original frame. The first 16 bits are the Tag Protocol Identifier (TPID, 0x8100) and the next 4 bits carry the priority fields (3-bit PCP and 1-bit DEI), which leaves 12 bits for the VLAN ID. The values 0 and 4095 (0x000 and 0xFFF in hexadecimal) are reserved. All other values from 1 to 4094 may be used as VLAN identifiers.

A frame tagged with VLAN ID 0 does not carry a VLAN ID at all, only priority information (the PCP and DEI fields, often called Class of Service tags), which is part of the same 802.1Q tag. This is a valid situation, and the receiving device should treat such a frame as if it were untagged, regardless of the port’s config (access, or trunk with native VLAN).
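To make the bit layout concrete, here is a minimal Python sketch that splits a raw 4-byte 802.1Q tag into its fields and labels a VLAN ID 0 tag as priority-tagged. The function names `parse_dot1q_tag` and `describe` are made up for this illustration and are not part of any NetScaler or Wireshark tooling.

```python
import struct

TPID_8021Q = 0x8100  # EtherType value that marks an 802.1Q tag


def parse_dot1q_tag(tag: bytes) -> dict:
    """Split a 4-byte 802.1Q tag into PCP, DEI and VLAN ID (hypothetical helper)."""
    if len(tag) != 4:
        raise ValueError("an 802.1Q tag is exactly 4 bytes")
    tpid, tci = struct.unpack("!HH", tag)  # two big-endian 16-bit fields
    if tpid != TPID_8021Q:
        raise ValueError(f"unexpected TPID 0x{tpid:04x}")
    return {
        "pcp": tci >> 13,          # top 3 bits: Priority Code Point
        "dei": (tci >> 12) & 0x1,  # next bit: Drop Eligible Indicator
        "vid": tci & 0x0FFF,       # low 12 bits: VLAN ID
    }


def describe(tag: bytes) -> str:
    """Classify a tag by its VLAN ID (hypothetical helper)."""
    vid = parse_dot1q_tag(tag)["vid"]
    if vid == 0:
        return "priority-tagged"  # no VLAN membership, only PCP/DEI
    if vid == 0xFFF:
        return "reserved"
    return "vlan-tagged"


# PCP 5, DEI 0, VLAN ID 0: a priority tag only
print(parse_dot1q_tag(bytes.fromhex("8100a000")), describe(bytes.fromhex("8100a000")))
# PCP 0, DEI 0, VLAN ID 4004
print(parse_dot1q_tag(bytes.fromhex("81000fa4")), describe(bytes.fromhex("81000fa4")))
```

The second sample tag carries VLAN ID 4004 (0xFA4), so it is classified as an ordinary VLAN-tagged frame, while the first one has all twelve VLAN ID bits set to zero.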

Diagram image by Bill Stafford - Own work, CC BY-SA 3.0

VLAN 0

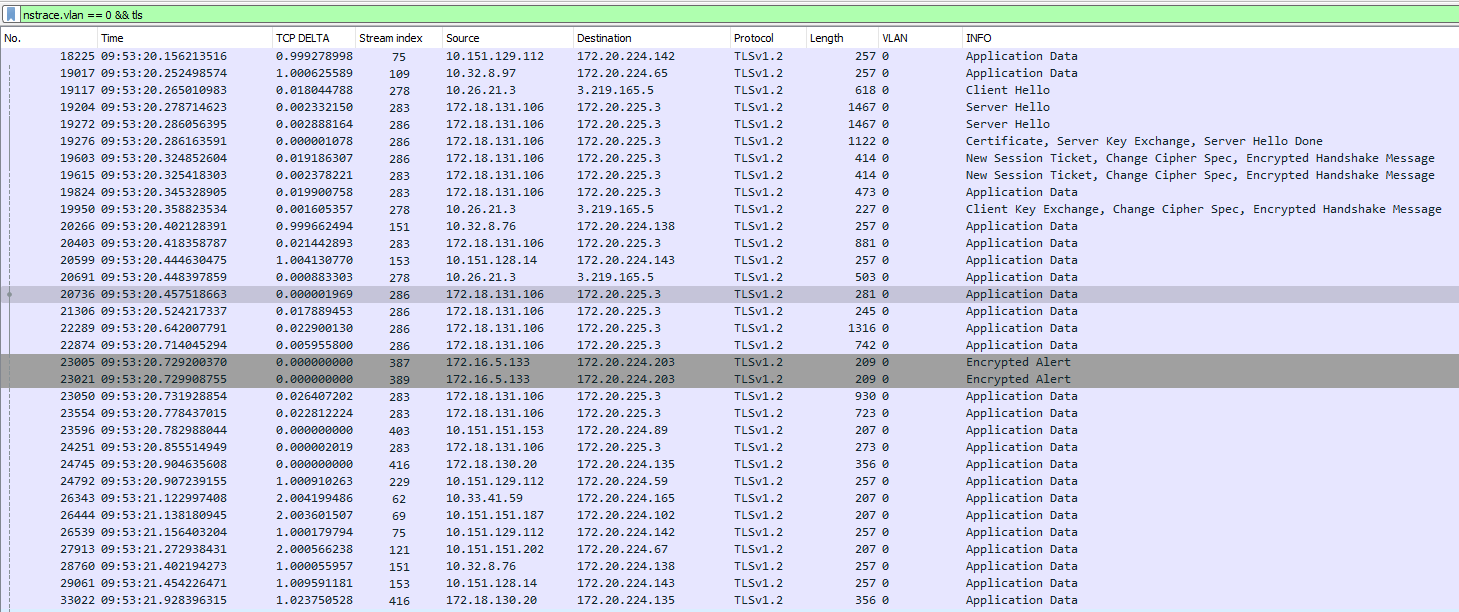

Take an unfiltered trace on the NetScaler appliance, then filter it in Wireshark by VLAN 0. The obvious question is: how does the NetScaler make use of VLAN 0?

nstrace.vlan == 0

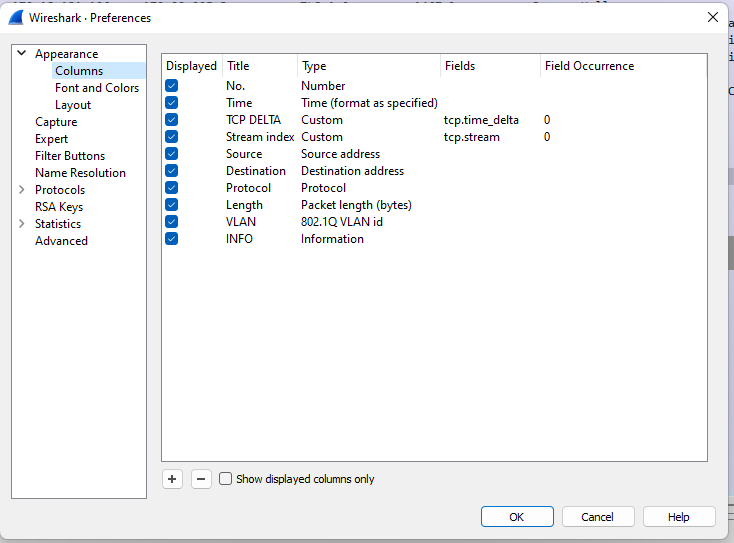

I highly recommend adding at least the following additional columns to your Wireshark for troubleshooting purposes.

- TIME

- TCP DELTA

- TCP STREAM

- 802.1Q VLAN id

Shown on the picture below:

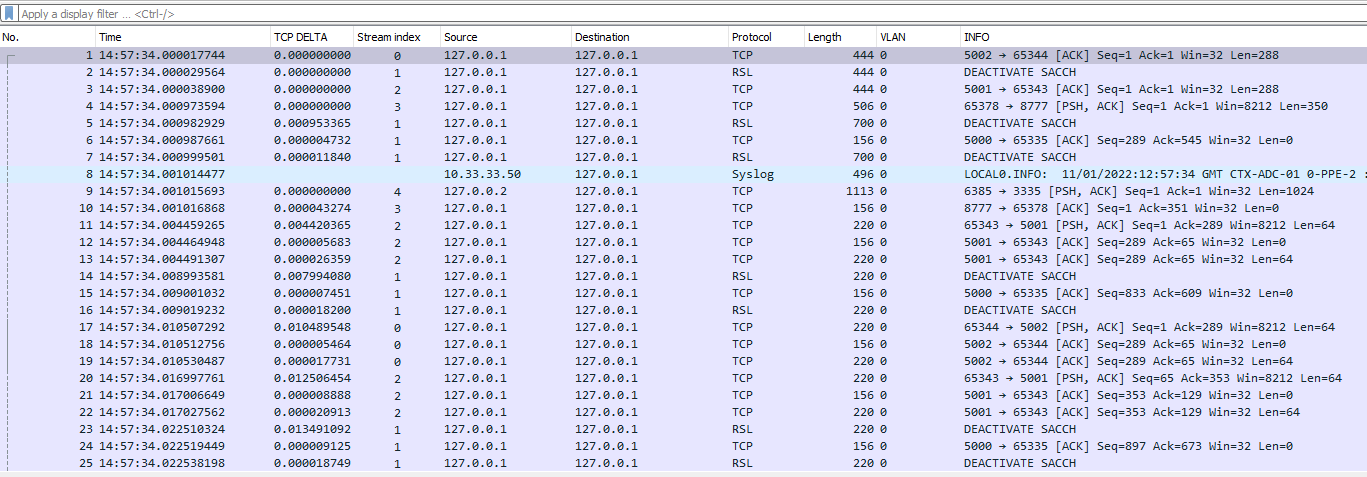

Whether or not you filter the trace, you’ll notice that almost all traffic in VLAN 0 goes from 127.0.0.1 to 127.0.0.2: RSL, Syslog, etc. Here and there you can see the MGMT IP (10.33.33.50 in the picture below). It looks like internal bridge communication for management, from one loopback address to another. This is perfectly fine; the NetScaler is not the first device to use loopback addresses for this purpose.

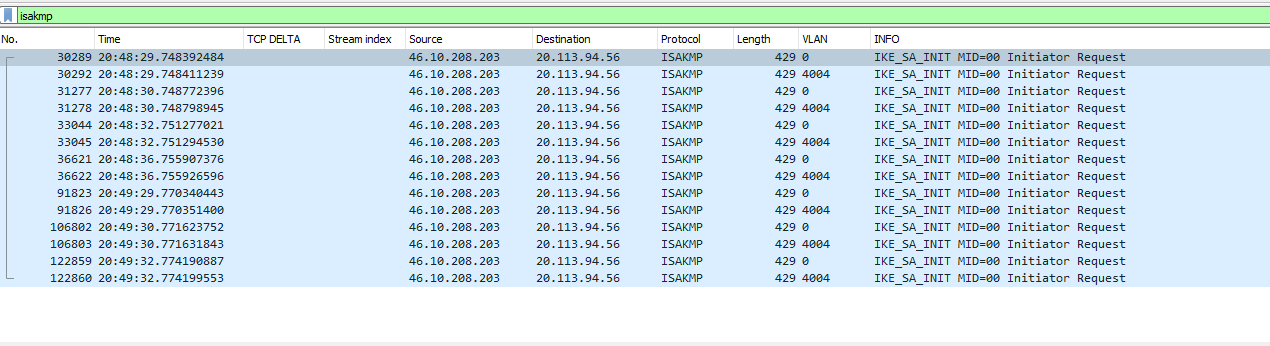

On a closer look I found IPSEC traffic, with the IKE_SA_INIT message appearing twice: once in VLAN 0 and once in VLAN 4004 (the latter being the VLAN configured on the box), this time without loopback addresses. It looks almost as if the VLAN 0 traffic is a duplicate of the real traffic, including the IKEv2 initial exchange. Why do we have it twice? My first assumption was that the CloudBridge (IPSEC) functionality isn’t exactly Client-Server traffic and, for one reason or another, has to pass through that internal management bridge.

But if we keep examining the trace, we’ll find TLS and HTTP traffic in VLAN 0 as well. These sessions are also duplicates of real traffic in their respective VLANs, and that’s definitely Client-Server traffic. The weird thing is that not all sessions have duplicates in VLAN 0. Why is that?

Software Packet Steering

This happens when the NetScaler steers a packet in software from one packet engine to another, so the NetScaler must have multiple data cores assigned in the first place. You can check which core handled a packet in the trace with the filter below. For certain types of traffic, the NetScaler must perform steering even when the same core is the initial handler. It usually happens in Frontend - Backend TCP session handling, where the frontend TCP session and the backend TCP session have to be handled by the same packet engine (CPU).

nstrace.coreid == x

You can also confirm the behavior by watching the following counter increment:

nsconmsg -s disptime=1 -d current -g rssf_tot_filters | more

This behavior has one major drawback: Wireshark’s interpretation of TCP-based sessions will be wrong. All of a sudden there are TCP Retransmissions and Out-Of-Order packets. You can no longer rely on Wireshark’s "TCP Retransmission" labels, because the TCP sessions are seen twice. That’s why you would generally exclude VLAN 0 from the trace with the following filter:

nstrace.vlan != 0

It’s much like the situation with traces that capture both the packets buffered for transmission and the transmitted packets.

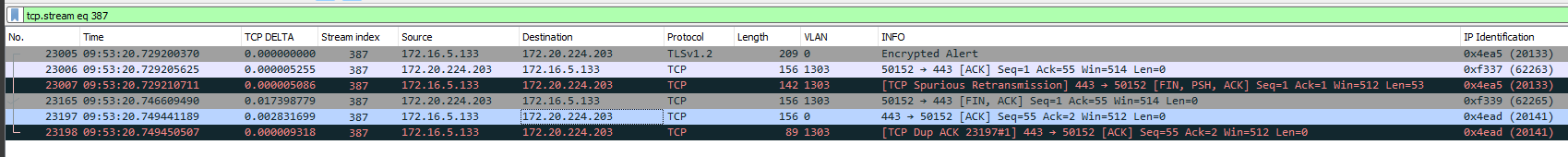

You can see in the picture that the last ACK packet is seen twice, on VLAN 0 and on VLAN 1303. Because of this, Wireshark interprets the packet as a TCP Dup ACK. But that’s not true: the client has not sent a second ACK. This makes reading Wireshark traces extremely difficult.
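The effect can be reproduced with a small Python sketch. It is a deliberately simplified stand-in for Wireshark’s TCP analysis (the real heuristics check more conditions), and the `Pkt` record, the `naive_dup_acks` function and the sequence numbers are all assumptions made up for this illustration.

```python
from typing import NamedTuple


class Pkt(NamedTuple):
    """Hypothetical, heavily reduced view of one captured TCP segment."""
    vlan: int
    flow: str          # e.g. "client->vip"
    seq: int
    ack: int
    payload_len: int


def naive_dup_acks(packets):
    """Return indexes of packets a simplistic analyser would call Dup ACK.

    A pure ACK (no payload) is flagged when the previous pure ACK on the
    same flow carried the same sequence and acknowledgment numbers.
    """
    last_ack = {}  # flow -> (seq, ack) of the last pure ACK seen
    flagged = []
    for i, p in enumerate(packets):
        if p.payload_len == 0:
            key = (p.seq, p.ack)
            if last_ack.get(p.flow) == key:
                flagged.append(i)
            last_ack[p.flow] = key
        else:
            # Data on the flow resets the comparison
            last_ack.pop(p.flow, None)
    return flagged


trace = [
    Pkt(1303, "client->vip", 1001, 5001, 0),  # the ACK the client really sent
    Pkt(0,    "client->vip", 1001, 5001, 0),  # the same ACK, seen again on VLAN 0
]

print(naive_dup_acks(trace))                              # [1]
print(naive_dup_acks([p for p in trace if p.vlan != 0]))  # []
```

With the VLAN 0 copy in the trace the second packet is flagged as a duplicate ACK; once VLAN 0 is filtered out, nothing is flagged, which matches what the client actually sent.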

You can exclude VLAN 0 traffic with the following expression on NetScaler:

CONNECTION.VLANID.EQ(0).NOT

Conclusion

VLAN 0 traffic can be safely ignored, but leaving it in the trace can really make your troubleshooting harder.

Thank you for reading!